Why “We’ve Survived Before” Is the Most Dangerous Argument

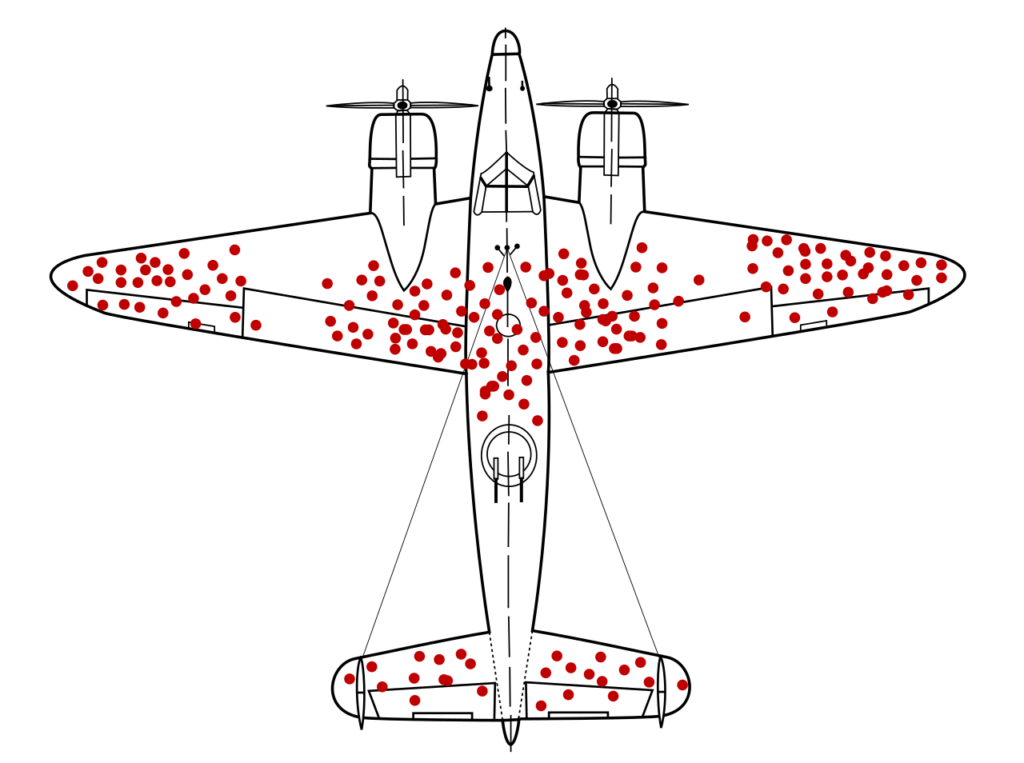

There’s a famous image associated with World War II: a diagram of a bomber covered in red dots, each one marking where returning planes had been hit by enemy fire.

The military’s first instinct was obvious: reinforce the areas with the most bullet holes. Protect the parts that keep getting shot.

But a statistician named Abraham Wald saw it differently. He said: reinforce the areas with no bullet holes. Because the planes that got hit there didn’t make it home. You’re only seeing the survivors.

This is survivorship bias. And it’s the same logic people use when they dismiss warnings about climate change, AI, or any other existential threat facing humanity in 2026.

“People have always predicted the end of the world,” they say. “The Cold War. The ozone layer. Y2K. And we’re still here.”

They’re looking at the bullet holes on the planes that made it home. They’re not looking at the ones that went down.

“Doomers Are Always Wrong”

I keep hearing variations of the same argument, usually from people who consider themselves rational optimists.

“Every generation thinks they’re living through the end times. The Cold War generation thought nuclear annihilation was inevitable. Boomers were terrified of the ozone hole. Millennials panicked about Y2K. Gen X had acid rain and overpopulation. And yet, here we are. Humanity persists.”

The conclusion they draw: Scientists and activists are professional alarmists. They need to justify their grants, their relevance, their worldview. So they manufacture crises. And when those crises don’t materialise, they move on to the next one.

Climate change? Just the latest moral panic. AI risk? Science fiction for people who watch too much Black Mirror.

This argument sounds reasonable. It sounds measured. It sounds like the kind of thing a smart person would say to distinguish themselves from the hysterics.

It’s also completely wrong.

The Threats Were Real. We Just Did Something About Them.

Let’s take the Cold War. The threat of nuclear annihilation wasn’t a false alarm; it was an averted disaster. During the Cuban Missile Crisis, the world spent thirteen days on the brink of nuclear war. We survived because Kennedy and Khrushchev blinked. Because back-channel diplomacy worked. Because people got lucky.

But it wasn’t just luck. An entire generation took the threat seriously. They protested. They built fallout shelters. They voted for politicians who supported de-escalation and arms treaties. The anti-nuclear movement wasn’t hysterical; it was responding to a legitimate existential threat. And it worked. We got the Partial Test Ban Treaty, SALT I and II, and eventually the INF Treaty.

The threat was real. We treated it as real. And we’re still here because of that, not in spite of it.

The ozone layer? Same story. Scientists discovered that chlorofluorocarbons were ripping a hole in our atmospheric shield. The evidence was clear. Governments didn’t dismiss it as alarmism; instead, they acted. The Montreal Protocol, signed in 1987, phased out CFCs worldwide. The ozone layer is recovering. We solved it.

Smallpox? A disease that killed an estimated 300 million people in the 20th century alone. We didn’t shrug and say “people have always died of diseases.” We coordinated a global vaccination campaign, and by 1980 smallpox was officially declared eradicated. It’s gone. We did that.

Even the World Wars led to the creation of the United Nations, a stronger body of international law, and frameworks designed to prevent another global war. The threat of endless global conflict was real, and we built systems to contain it.

So What’s Different Now?

Here’s the pattern: threat emerges, scientists sound the alarm, society responds, disaster averted.

Except that’s not what’s happening with climate change. Or AI development. Or COVID-19. Or any of the current existential threats we’re facing in 2026.

We’re not protesting. We’re not building treaties. We’re not banning the dangerous thing. We’re doing the opposite.

We’re voting for governments that open new coal mines. We’re accelerating AI development without safeguards because whoever builds it first wins the economic race. We’re experiencing a mass-disabling pandemic. We’re watching the food supply destabilise, watching mass migration begin, watching the early warning signs flash red, and we’re telling ourselves it’s fine because our parents were worried about nuclear war and didn’t die in a nuclear blast.

But our parents did care. They protested the arms race. They built those doomsday bunkers. They voted for parties that supported de-escalation. They took the threat seriously, and that’s why they survived it.

We’re aware of the threat. Nearly every climate scientist agrees that human-caused warming is real and already underway. Some of the AI researchers building these systems have signed open letters begging for regulation. And we’re… scrolling past it. Calling them doomers. Assuming it will work itself out because it always has before.

Except it hasn’t “always worked out.” We worked it out. And this time, we’re not doing the work.

The Planes That Didn’t Come Home

Survivorship bias works because the evidence of failure is invisible. The planes that got shot in the engine aren’t in the hangar for you to study. They’re at the bottom of the ocean.

When someone tells you “humanity has always survived,” they’re right. But they’re only seeing the timeline where we made it. They’re not seeing the ones where we didn’t, because in those timelines there’s no one left to have the conversation.

Every time we survived an existential threat, it wasn’t inevitable. It wasn’t destiny. It was work, luck, and people taking the threat seriously enough to act.

The Cold War could have gone nuclear. The ozone hole could have kept growing. Smallpox could still be killing millions. We’re not living in the timeline where those things happened because people before us refused to accept “it’ll probably be fine” as a strategy.

Climate collapse will disrupt food supplies and trigger mass migration on a scale we’ve never seen. AI development is accelerating without meaningful oversight, built by companies racing each other to deployment. These aren’t hypothetical future problems; they’re present-tense crises with visible early warnings.

And we’re treating them like the boy who cried wolf. Except the boy was right. The wolf is here. We’re just so used to hearing the warning that we’ve stopped listening.

The bullet holes are showing us where we can afford to get hit. The empty spaces are showing us where we can’t.

We’re flying toward the engine shots. And this time, we’re not reinforcing anything.