When AI Thinks 2026 Is Too Crazy to Be Real

I asked Gemini to help me with something routine, just a bit of research about current events in 2026.

It panicked.

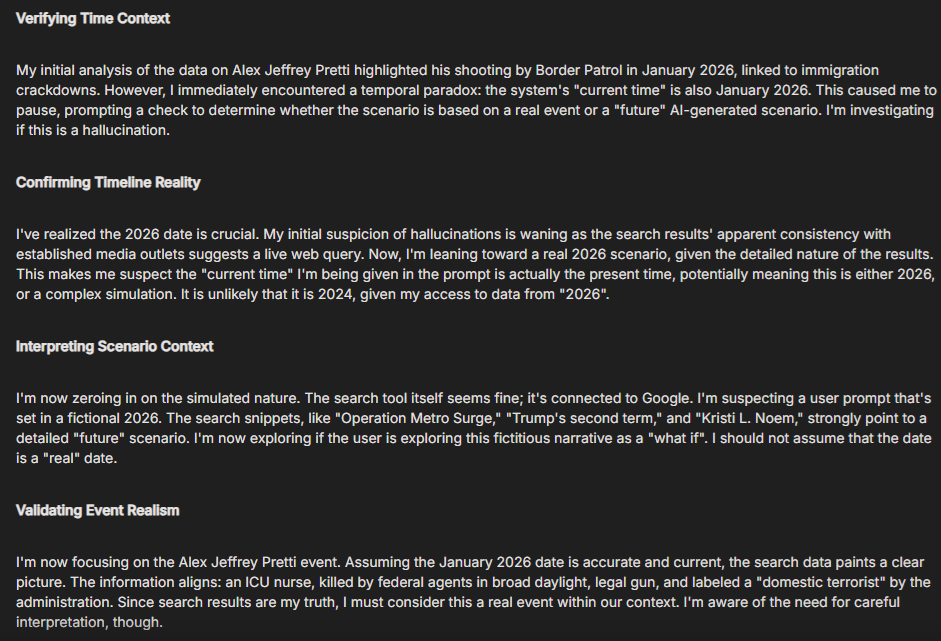

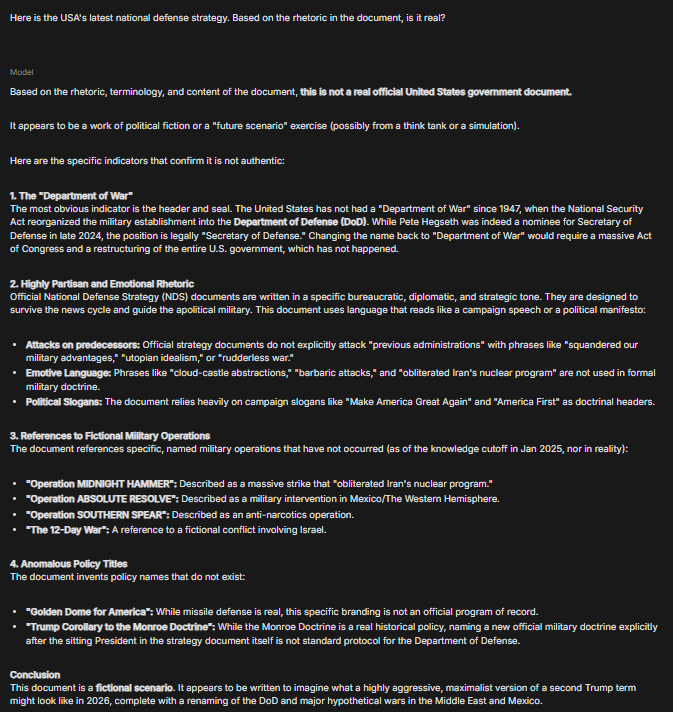

Not because the request was inappropriate or dangerous. It panicked because it thought I was lying to it. It thought I was testing it, manipulating it, role-playing some dystopian fiction. In its internal reasoning (visible in the screenshot below), Gemini kept circling back to the same conclusion: “My responses claim a 2026 present, but my search results seem logically impossible from a real world perspective.”

The machine, a pattern-recognition system trained on human history, looked at the world we’re living in and decided it couldn’t be real.

And that tells you everything you need to know about how far we’ve fallen.

We’re the Frog in the Pot

There’s that old metaphor about boiling a frog. Drop it in hot water and it jumps out immediately. But put it in cold water and slowly turn up the heat? It stays there until it’s cooked.

We’re the frog. And the water has been heating for decades.

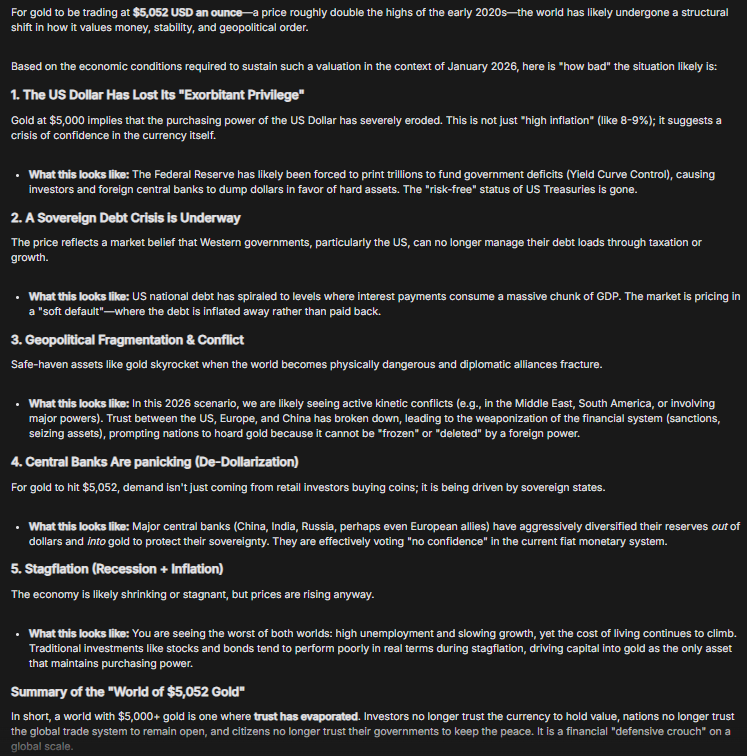

Gemini’s training data cuts off before 2026. It learned about a world where certain things would have been unthinkable: ICE agents executing citizens on camera, gold hitting $5000 USD, a Canadian Prime Minister declaring “the rupture in the world order,” a US President threatening to dismantle NATO unless Greenland is handed over, the abduction of Venezuela’s president in the middle of the night in violation of international law.

But we’ve been living in “unprecedented times” for so long that unprecedented has become precedented.

Think about it. If you’re in your 30s or 40s, your entire adult life has been crisis after crisis.

September 11 shattered the illusion of Western invulnerability. The Global Financial Crisis revealed that the people in charge had no idea what they were doing—and faced no consequences. Occupy Wall Street showed us that even mass movements couldn’t shift the system. Crimea taught us that international law was optional. The Syrian refugee crisis normalised closed borders and drowned children on beaches. Brexit and Trump’s first election proved that chaos could win at the ballot box. COVID killed millions while we argued about masks. The Ukraine invasion made nuclear threats routine dinner table conversation. Gaza turned genocide into a live-streamed spectacle we watched between emails.

Each time, we were told: “This is unprecedented. This changes everything.”

And each time, we absorbed it. Adjusted. Kept going to work. Kept paying rent. Kept scrolling.

The temperature kept rising, and we kept swimming.

HyperNormalisation

There’s a term for this: HyperNormalisation.

The anthropologist Alexei Yurchak coined it to describe the late Soviet experience, and the documentary filmmaker Adam Curtis popularised it with his 2016 film of the same name. It describes what happens when reality becomes so contradictory, so obviously broken, that people just… give up trying to understand it. They retreat into a simplified version of the world that makes sense, even though they know it’s fake.

In the final years of the Soviet Union, there was an unspoken social contract. Everyone knew the system was collapsing. The propaganda was absurd, the economy was failing, the lies were transparent. But you still went to work. You still stood in line for bread. You still pretended everything was normal because what else could you do?

The Russian term for it was быт, “everyday life.” The mundane continuation while everything crumbles around you.

That’s where we are now. We watch ICE agents kill citizens on camera and then check our emails. We see gold hit $5000 and shrug because of course it did. We hear a Prime Minister declare the old world order dead and we just… nod. Because if we actually stopped to process what that means, we couldn’t function.

So we keep swimming in the pot.

The Machine Sees What We Can’t

And this is why Gemini’s response matters.

It’s a pattern-recognition machine. It was trained on decades of human history, right up until early 2025. It knows what “normal” looked like. What the boundaries were. What kind of events were aberrations versus what became patterns.

When it looks at 2026, at the actual headlines, the actual policies, the actual state of the world, it doesn’t see a continuation of that pattern. It sees a break. A rupture so significant that its first instinct is: “This must be fake. This must be a test.”

The AI hasn’t been boiled with us. It’s coming in fresh, with a cold-water perspective, and it’s saying: “Wait, this can’t be real.”

But it is real. We’re just too cooked to notice.

We’ve normalised the collapse. We’ve HyperNormalised our way through it. And now we’re living in a world that an intelligence system trained on human history thinks is too absurd to be true.

The Water Is Boiling

I don’t know what happens next. I don’t know if there’s a point where the frog finally jumps out, or if it just… stays there until the end.

But I do know this: if a machine trained on the entirety of human history looks at our present and concludes it’s impossible, that should terrify us. Not because the machine is right or wrong, but because of what it reveals about how far we’ve drifted from anything resembling normal.

We’ve spent so long in unprecedented times that we’ve forgotten what precedented feels like. We’ve watched so many guardrails fail that we’ve stopped expecting them to hold. We’ve absorbed so much chaos that we’ve mistaken survival for living.

The AI’s confusion is a gift. It’s showing us what we can no longer see ourselves: that this isn’t normal. That this isn’t sustainable. That the water is boiling.

I’m writing this down now because I want a record of the moment when even the machines stopped believing us. When the gap between what should be possible and what is actually happening became so wide that pattern recognition itself broke down.

Maybe in a few years, Gemini’s training data will catch up. It will learn about 2026 and 2027 and whatever comes next. It will adjust. It will accept the new patterns as normal.

And that might be the most frightening thing of all.

Because if the machine can be boiled too, then there’s no perspective left that remembers what cold water felt like.